When Extraction Becomes Ambient

On Contaminating Surveillance With Play, Love, and Radical Imagination

I live in Milan, and the Milano Cortina Olympics have just ended. For three weeks, more than 800 cameras and drones, dubbed “The New Vuvuzelas”, captured every moment for media coverage. Hundreds more were deployed for security. Milan’s public space became an entirely watched space. What remains after the Games isn’t only sports infrastructure: Olympic security investments have historically outlived the event, embedding enhanced surveillance capacities into host cities.

If surveillance becomes ambient, what kinds of contamination are still possible from within it?

When the Watcher Becomes Player

Last week, I happened to attend the latest meetup of CIFRA, the digital art streaming platform. Two artists whose work questions surveillance systems were there.

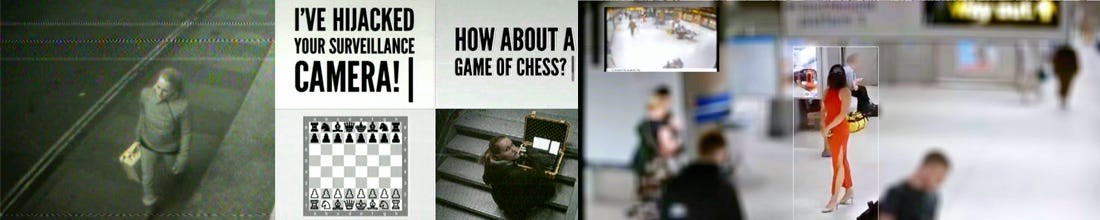

Mediengruppe Bitnik created Surveillance Chess during the 2012 London Olympics. A woman with a yellow suitcase enters a London tube station under CCTV surveillance. She opens the suitcase, flips a switch. The surveillance feed shifts. On the control centre monitor: a chessboard appears. A voice broadcasts through loudspeakers: “I control your camera now. I’m the one with the yellow suitcase. How about a game of chess? You’re white. I’m black. Call or text me to make your move.” By intercepting the unencrypted signal between camera and control centre, Bitnik forces reciprocity where there had only been a unilateral gaze. The watcher becomes player. The monitoring system becomes a communication medium. The game begins. Open-ended.

The other artist, OONA, created Dear David: I’ve Been Looking For You (2025). She performs in the London tube, carefully noting what she’s wearing and how she moves, then legally requests her CCTV footage under the UK Data Protection Act. To retrieve it, she has to address her request to David, the Transport for London employee who has been processing these requests for 29 years. OONA uses her own surveilled body as raw material to create an imaginary relationship with the gaze watching her. David remains invisible and anonymous. What she does is project affect onto a bureaucratic apparatus, searching for the human in the machine without ever really reaching it. The “love letter” addresses a function as much as a person, introducing intimacy into a channel designed for administrative indifference.

The setup reminds me of Faceless (2007) by Austrian artist Manu Luksch, who made a science fiction film entirely from CCTV images of the London tube (with Tilda Swinton’s sublime voice), retrieved through the same legal data-access process. Maybe even via the same David, given how long he’s been working there! I participated in a workshop with Luksch a few years later. The goal was to perform under public surveillance cameras in Paris, then hack the footage to assemble a short scene. Except my group accidentally hacked a baby monitor’s video signal and discovered a sweatshop in a Parisian basement… Watching the watchers doesn’t always go as planned. Parenthesis closed.

In 2026, Bitnik’s technique is obsolete. Facial recognition, real-time AI, and encrypted streams have changed everything. But the gesture remains: creating spaces of reciprocity in architectures designed to eliminate them.

From Panopticon to Ambient Extraction

If this feels important to me today, it’s because we’ve moved beyond Foucault’s panopticon and tipped into a strange paradox.

Online, we're retreating, building what Maggie Appleton calls "the cozy web": private Discord servers, Slack channels, dark forests where conversation can be "depressurised" away from the indexed, optimised, gamified mainstream. But whilst we hide our posts behind digital walls, our bodies are being harvested in physical space.

Strategist Jasmine Bina precisely names what’s happening in Repricing the Human Experience: ambient extraction. She recalls a personal experience where masked First Amendment Auditors (people who film in public spaces claiming constitutional rights, often monetising the footage) filmed her at her nail salon to generate paid content online. She didn't click anything, download anything, engage with anything. She just existed in the frame. "One side has masks—legal entities, anonymous usernames, literal hoods—while the rest is exposed," she writes. The more confrontation a video provokes, the more engagement it generates, and the more the platform pays. The asymmetry IS the business model.

This is happening in a context where filming others has never been more accessible. The smart glasses market exploded last year, with global shipments up 110% in the first half of 2025 according to BoF x McKinsey. And last week, the New York Times reported that Meta wants to integrate facial recognition into its glasses. It reminds me of Lilia Hassaine’s novel Panorama (2021), in which she imagines a city with transparent walls. Everyone sees everyone, constantly. Permanent exposure destroys the intimate, and total transparency manufactures monsters. The smart-glasses monsters of our era already have a name: “glassholes”. On top of that, AI-enhanced glasses don’t just film; they blur our perception of what’s real and what isn’t. That’s what led a man to wander the desert searching for aliens after buying Meta’s AI glasses… that’s the world we live in!

Which brings me to the other half of the loop. Worldcoin (Sam Altman’s other venture) has just landed in Rome and opened a pop-up in Barcelona. It has shifted from the B2C model used in Latin America, where cryptocurrency was exchanged for iris scans, to B2B corporate authentication services. In Kenya, it had to erase its entire biometric database after legislation changed, but the European rollout continues anyway. So OpenAI floods the world with generative content that makes it impossible to verify what’s real, then Worldcoin sells biometric proof of humanness. It’s the classic Volvo paradox (manufacture the danger, sell the safety equipment), except this isn’t about seatbelts. It is bodily extraction. Your flesh becomes the credential that grants you existence within the system.

Affirmative Ethics and Radical Imagination

If all of this sounds pretty gloomy to you, let me offer a glimmer of hope. I was listening to an excellent interview with philosopher Rosi Braidotti, Professor Emerita, about affirmative ethics. She calls it a training in thinking through the conditions of one’s bondage. Affirmative ethics does not deny the apparatus. It maps it. It asks where activation is still possible within it. Not outside the system, but inside its constraints.

Surveillance produces asymmetry as structure. Affirmative ethics looks for the margins where that structure can be altered. Different people, different nodes. The organisers, the lawyers, the builders of alternative infrastructure, the artists making certain logics visible. When Bitnik intercepts a CCTV signal to play chess, when OONA writes love letters to David, when Portland protesters use facial recognition to identify police who removed their name tags, when artists poison AI training datasets with images that make models collapse, they’re creating what Braidotti calls “possibility margins.” Openings. Contaminations. Proof that the system’s logic isn’t total, isn’t inevitable.

I’m writing from Milan. That’s my window on this assemblage. I’m not giving surveillance lessons to people patrolling Minneapolis streets against ICE; they’re operating at a scale I can barely comprehend. What I can hold open from here are examples of radical imagination under constraint.

The Olympics have ended. These examples show how reciprocity can be inserted back into the circuit of surveillance. Local. Repeated. Trying to alter how the system behaves.
